Deepities and AI: Navigating the Landscape of Pseudo-Profundity

The concept of “deepity” has gained considerable attention in philosophical and intellectual circles. Popularized by philosopher Daniel Dennett, a deepity is a statement that appears profound but turns out to be trivial or meaningless on closer examination. Dennett locates the trick in ambiguity: on one reading the statement is true but trivial, and on another it would be earth-shattering if true but is false. Deepities are increasingly prevalent in modern discourse, especially in discussions of cutting-edge technologies such as artificial intelligence (AI). The rapid development of AI and its integration into many aspects of society have given rise to numerous deepities, highlighting the need for critical thinking and scrutiny to distinguish genuine insights from superficial claims.

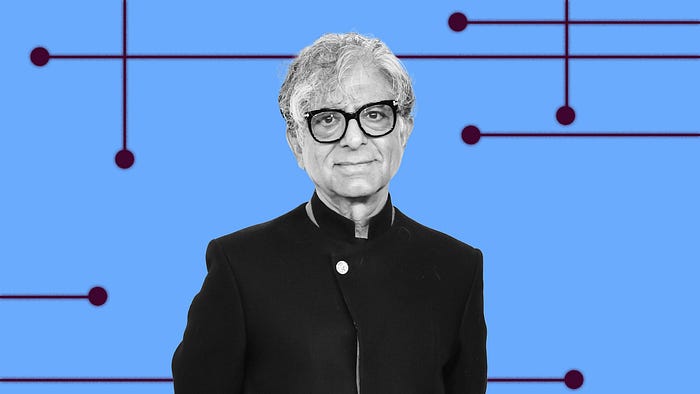

A familiar source of deepities is the work of Deepak Chopra, a well-known New Age author and speaker. Chopra’s writings and speeches are often criticized for blending scientific terminology with spiritual language, a mix that frequently yields statements that sound profound but lack substantive meaning. The “Wisdom of Chopra” website (http://wisdomofchopra.com) humorously illustrates this phenomenon by generating random “Chopra-esque” deepities with a basic algorithm. The site shows how easily complex-sounding but ultimately meaningless phrases can be produced, underscoring the importance of evaluating such statements critically rather than accepting them at face value.
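To show how little machinery such a generator needs, here is a minimal sketch that assumes a simple template-filling approach. It is not the site’s actual implementation: the word lists, the sentence template, and the function name `make_deepity` are all hypothetical, chosen only to illustrate the idea.

```python
import random

# Hypothetical word lists in a New Age register (illustrative only, not taken from the site).
NOUNS = ["consciousness", "the universe", "quantum potential", "infinity", "awareness", "intention"]
VERBS = ["transcends", "unfolds", "gives rise to", "is the seed of", "illuminates", "reflects"]
ADJECTIVES = ["infinite", "timeless", "hidden", "unmanifest", "sacred", "boundless"]
OBJECTS = ["reality", "possibility", "being", "creation", "the self", "existence"]

# Hypothetical sentence template: subject, verb, adjective, object.
TEMPLATE = "{noun} {verb} {adjective} {obj}."


def make_deepity(rng: random.Random) -> str:
    """Fill the template with one randomly chosen word or phrase from each list."""
    sentence = TEMPLATE.format(
        noun=rng.choice(NOUNS),
        verb=rng.choice(VERBS),
        adjective=rng.choice(ADJECTIVES),
        obj=rng.choice(OBJECTS),
    )
    # Capitalize only the first character so the output reads like a sentence.
    return sentence[0].upper() + sentence[1:]


if __name__ == "__main__":
    rng = random.Random()  # unseeded: a different "insight" on each run
    for _ in range(3):
        print(make_deepity(rng))
```

Passing a seeded generator such as `random.Random(42)` makes the output reproducible. The design choice is the point: a few short word lists and a single template are enough to produce grammatical, profound-sounding sentences with no content behind them.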

As artificial intelligence continues to capture the public imagination, it has also become a breeding ground for deepities. Many self-proclaimed AI “experts” and thought leaders make grandiose claims about the potential of AI, often resorting to vague or misleading language. This can create the illusion of profound technical insight, leaving non-experts feeling that they have missed crucial information or suffering from imposter syndrome. Although working with AI is relatively straightforward for most practitioners, some individuals portray the field as an overly complex and forbidding discipline. That portrayal does not reflect the reality for those who are not on the core teams of leading technology companies such as Google, OpenAI, or Anthropic. It is essential to approach such declarations with a critical eye, distinguishing genuine advances from superficial rhetoric. Doing so fosters a more accurate and nuanced understanding of AI, one that appreciates both its real potential and its limitations.

Examples of AI Deepities:

- The term “prompt engineer” has been humorously critiqued by comedian Jon Stewart on his show. He compiled videos of people using this grandiose title and proposed a more accurate alternative: “Types Question Guy.” Stewart’s satire highlighted the absurdity of the term, suggesting that it merely describes someone who types questions into an AI system.

- Discussions of AI and consciousness often give rise to deepities. Consciousness itself is a difficult topic, and much of our reliable knowledge concerns how to suppress it, for example with anesthesia. Physicist Roger Penrose argues that consciousness depends on non-computable quantum processes in the brain that classical physics and computation cannot account for (Penrose, 1994). Whatever one makes of that view, many people fail to realize that the term “artificial neural network” reflects an analogy with, or loose inspiration from, the brain, not a replication of how it works. Commentators with little scientific background nonetheless make frequent, sweeping pronouncements on the subject that amount to deepities.

- The hype around Artificial General Intelligence (AGI) often stems from misunderstandings about what AGI entails. Unlike specialized AI systems, AGI refers to hypothetical machines able to understand, learn, and apply intelligence across a wide range of tasks, much as a human can. However, there is no universally accepted definition of AGI, which leads to varied interpretations of what intelligence and creativity mean. Misunderstandings about Large Language Models (LLMs), such as those behind ChatGPT, contribute significantly to AGI hype, because these models are sometimes wrongly treated as early versions of AGI. Whether intentional or not, such misconceptions fuel exaggerated claims and speculative narratives that attract investment and attention. Recognizing the difference between today’s LLMs and AGI is crucial for appreciating both the current limitations and the future potential of AI technologies.

In “The False Promise of ChatGPT,” a New York Times essay written with Ian Roberts and Jeffrey Watumull, linguist Noam Chomsky criticized the limitations of ChatGPT and similar systems, stating, “Given the amorality, faux science, and linguistic incompetence of these systems, we can only laugh or cry at their popularity” (Chomsky, 2023). Despite such critiques, many so-called “AI experts” have yet to fully grasp the limitations of LLMs, so the public may continue to encounter inflated expectations about what these systems can do. Understanding these nuances helps separate genuine advances in AI from overhyped claims and supports a more realistic view of AI’s current state and potential.

AI deepities often rely on a handful of common fallacies and rhetorical devices: vague or poorly defined terms, bold claims made without evidence, appeals to emotion rather than reason, and the conflation of scientific concepts with spiritual or metaphysical ideas, sometimes shading into pseudoscience or outright malpractice. Unpacking these deepities usually reveals that they lack substantive content or predictive power. This scrutiny clarifies the actual capabilities and limitations of AI technologies, allowing a more grounded and accurate understanding of their impact and potential.

AI deepities spread misinformation, distorting public understanding and leading to misguided policies or investments. They create false certainty about AI’s future, discouraging critical thinking and diverting resources toward ineffective initiatives. To counter this, it is crucial to promote clear language and evidence-based reasoning. By grounding discussions in reality, we can better navigate AI’s challenges and opportunities and help ensure that its advances benefit society rather than being obscured by overhype or misunderstanding.